I’ve watched my friend Sarah roll her eyes at ChatGPT, dismiss Gemini as “glorified autocomplete,” and refuse to touch any AI writing assistant on principle. She’s a researcher who’s been burned too many times by confident-sounding nonsense. Last month, I caught her using NotebookLM to analyze interview transcripts. When I pointed out she was using AI, she paused and said, “This is different. It doesn’t make things up.”

She’s right. NotebookLM works because it doesn’t pretend to know everything—it only knows what you explicitly feed it. That constraint, which sounds limiting, is actually why it succeeds where flashier AI tools fail. It’s trustworthy in a way that unrestricted AI simply can’t be.

Why AI skeptics actually have a point

The hallucination problem isn’t minor

The skepticism around AI tools is completely justified. When you ask ChatGPT or Claude about your project, they can confidently reference documents they’ve never seen and cite sources that don’t exist. For anyone doing serious work (research, legal analysis, business strategy), that’s disqualifying.

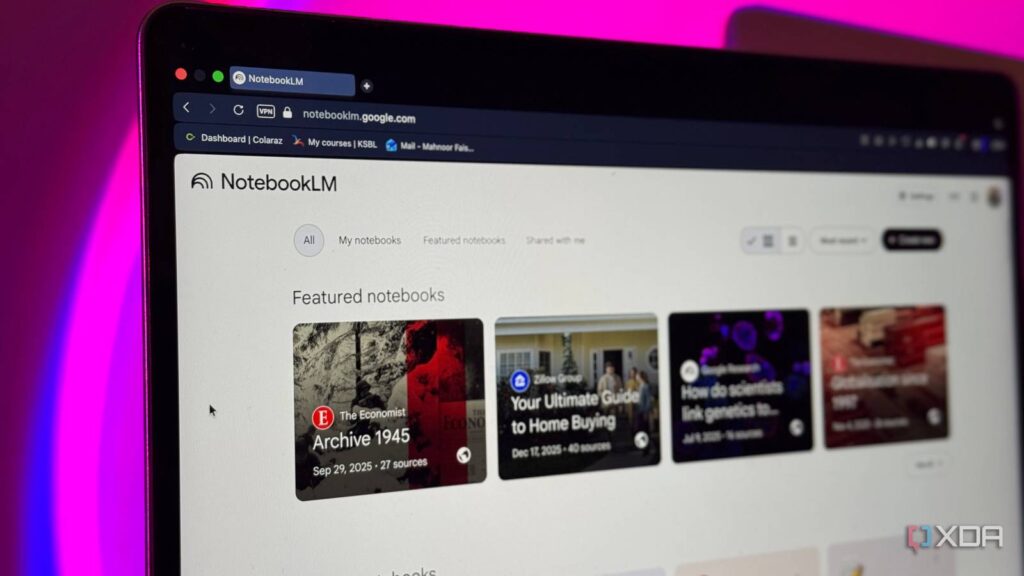

NotebookLM sidesteps this entirely. Upload your research papers, meeting notes, or project documents, and it will only reference what’s actually there. Ask it about something outside your uploaded sources, and it tells you it doesn’t have that information. No hallucinations. No invented citations. Just your material, reorganized and synthesized.
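For the technically curious, here is a toy Python sketch of the general idea of answering only from supplied sources. It is not how NotebookLM works internally, which this article doesn't cover. The file names, sample text, stopword list, the `keywords` and `grounded_answer` helpers, and the `min_overlap` threshold are all made up for illustration, and simple keyword overlap stands in for whatever retrieval a real tool uses.

```python
import re

# Hypothetical stand-ins for uploaded sources; names and text are invented.
SOURCES = {
    "interview_01.txt": "Participants said onboarding took too long and the setup guide was confusing.",
    "interview_02.txt": "Several users praised the export feature but wanted faster search.",
}

# A small, arbitrary stopword list so common words don't count as matches.
STOPWORDS = {"the", "a", "an", "and", "but", "of", "to", "was", "what",
             "did", "do", "is", "about", "say", "users", "how"}


def keywords(text):
    """Lowercase the text and return its non-stopword words as a set."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def grounded_answer(question, sources, min_overlap=2):
    """Return (passage, source_name) pairs sharing at least `min_overlap`
    keywords with the question, or a refusal string when nothing matches."""
    q = keywords(question)
    hits = [
        (text, name)
        for name, text in sources.items()
        if len(q & keywords(text)) >= min_overlap
    ]
    if not hits:
        # Nothing in the supplied material supports an answer, so say so
        # instead of falling back on outside knowledge.
        return "That isn't covered in the provided sources."
    return hits


if __name__ == "__main__":
    print(grounded_answer("What did people say about onboarding and the setup guide?", SOURCES))
    print(grounded_answer("Who won the 2022 World Cup?", SOURCES))
```

The point of the sketch is the last branch: every returned passage carries the name of the source it came from, and a question with no support in the sources gets a refusal rather than a guess.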

Research synthesis that actually cites its sources

When you need to trust the references

This is where NotebookLM becomes indispensable. I uploaded fifteen academic papers on attention mechanisms for a technical article I was writing. Instead of skimming abstracts and hoping I caught the important bits, I asked NotebookLM to map out how different papers approached the same problem.

It generated a comparative analysis with inline citations showing exactly which paper each claim came from. When I needed to verify something, I could click through to the source. The time saved was significant, but more importantly, I trusted the output enough to actually use it.

Traditional AI tools would have given me a confident-sounding summary mixing actual research with plausible-sounding fabrications. NotebookLM just told me what my sources said.

Meeting notes that become action plans

It connects scattered information

I uploaded a month’s worth of meeting notes (team syncs, client calls, and strategy sessions) into a single NotebookLM notebook. The notes had been scattered across Google Docs, fragmented and inconsistent. I asked it to identify recurring action items that hadn’t been completed.

It pulled out five initiatives that kept appearing across different meetings but never got addressed. Each one included context from multiple conversations, showing how the discussion evolved. This isn’t something you’d catch by manually scanning documents, because the connections only become visible when all the material is processed together.

NotebookLM works here because it draws only on your actual notes. It’s not inventing priorities or hallucinating tasks. It’s pattern-matching across your real information.

Learning new domains without the fluff

When you need density, not breadth

The third use case is probably the most underrated: using NotebookLM to learn from dense material. I uploaded a technical document of about 200 pages of dry, jargon-heavy content. Instead of reading linearly, I asked targeted questions as they came up during implementation.

“How does this system handle concurrent writes?” NotebookLM pointed me to the exact section with the locking mechanism details. “What are the performance implications of indexing this way?” It synthesized information from three different chapters that each addressed part of the answer.

This works because NotebookLM isn’t padding responses with general knowledge. It’s not telling me how databases generally work. It’s telling me how this specific system works, based on the documentation I provided.

Why the constraint is a feature

NotebookLM succeeds because it’s designed around a limitation

It can’t browse the internet, doesn’t have opinions about current events, and won’t write you a sonnet. It just processes what you give it. For AI skeptics who’ve been burned by overconfident tools, that narrow focus is exactly what makes it trustworthy.

My friend Sarah still doesn’t think of herself as someone who uses AI. But she uses NotebookLM daily because it does one thing exceptionally well: it makes her own information more accessible without adding noise. Sometimes the best tool is the one that knows its limits.