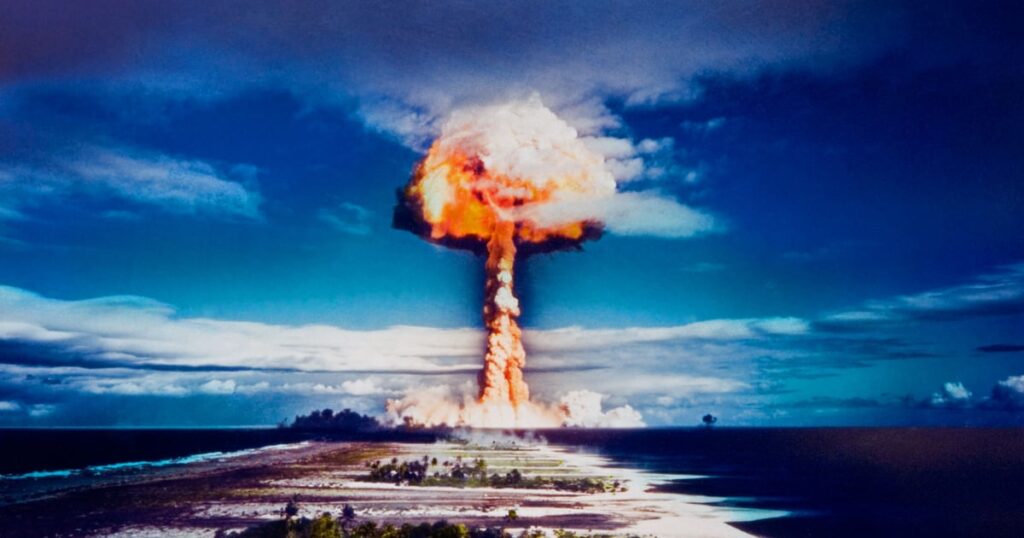

BLACK HAT ASIA – Singapore – The emergence of large language models (LLMs) like Anthropic’s Mythos and, this week, OpenAI’s GPT-5.5, has set the security world atwitter with dark speculation that we are entering an era of industrialized, autonomous, mass exploitation across any platform or infrastructure: a nuclear threat that no organization, anywhere, can hide from.

But not so fast, argues RunSybil CEO Ari Herbert-Voss: while defenders need to change their risk calculus to prepare for ever-accelerating threats from AI, the limits of human effort still matter when it comes to how successful those threats become; and it’s a teachable moment for the security industry.

“What we’re seeing with LLMs is what we saw with fuzzers in the 2000s; fuzzing was supposed to change everything,” says Herbert-Voss, who was the first security hire at OpenAI, where he led the red team engagements for the GPT-3 and Codex model releases. “A non-human could find crashes at scale, quickly, automatically. People thought it would make vuln researchers irrelevant, and trigger a flood of zero-days like the industry had never seen. Some of that happened in small ways, but fuzzing created a new problem, which is a deluge of possible bugs.”

In other words, someone still had to sort through the flaws, identify the exploitable crashes, and figure out what caused the bug to be introduced in the first place.

“In a way, fuzzing made vuln researchers more valuable,” he tells Dark Reading.

In the same way, LLMs have the ability to automatically generate massive datasets, confirm something is wrong, and provide ways to offensively exploit that wrongness, he explained during his keynote on Friday at Black Hat Asia in Singapore. But knowing something is wrong and knowing what to do about it are different problems. And this, he says in an interview, highlights areas where human expertise remains not just necessary but crucial, for both attackers and defenders.

“I’ve said it once and I will say it again: The capability ceiling is rising fast,” he explains. “The capability floor is not keeping pace. Teams can generate more possible bugs than ever before. Validating which ones have real security impact still requires a human. That gap is the problem.”

Long Way to Go Before Cyberattack ICBMs Launch

Autonomous performance across offensive tasks is improving by leaps and bounds, that much is true, Herbert-Voss acknowledged during his talk.

“One of the most important things that’s happening right now is what we call the scaling hypothesis,” he said during the keynote. “More [training] data plus more compute power plus more parameters means better performance across a variety of tasks, and this has held surprisingly well over the last seven-plus years. What has happened recently is that capabilities are scaling super-linearly, rather than linearly: When you train a model that is twice as big, for twice as long, on twice as much data, you can get a model that’s four times as capable. This is the difference between the last generation of models and this latest generation.”

Indeed, he points out that between 2023 and 2026, the average time from discovery of a bug to its exploitation dropped from five months to 10 hours.

“‘Shifting left’ is more important than ever, as it will soon become the case that organizations simply won’t be able to ship bugs without those bugs being found and used in short order,” he says. “We’re seeing this play out in professional capture-the-flag (CTF) competitions already, where challenges that previously took teams hours are now being solved in minutes of going live by CTF players and a couple agentic coding tools.”

However, LLM-based offensive improvements vary across different classes of vulnerabilities. Mythos has achieved “massive gains” when it comes to finding and exploiting low-severity “shallow bugs,” he noted in the keynote; modest gains for mid-tier bugs; and relatively sparse gains for the most severe. Humans still need to do a large amount of filtering and validation to reap the benefits of accelerated bug discovery.

“A good example of progress is multistep attack execution: recent evaluations of Anthropic’s Mythos by the UK AI Security Institute show models can carry out long offensive workflows autonomously in controlled environments, completing a substantial portion of attack chains,” he tells Dark Reading. “This is something earlier models couldn’t do. However, the boundary is still clear: These systems are not reliably consistent on real-world targets.”

In other words, when it comes to meaningfully assessing the impact of a vulnerability, models do not guarantee that those findings are really worth the time, he explained from the stage. “Individual attackers seem to get lucky when they rely on models to find exploits, but many iterations are required if you want to uncover specific impacts on specific targets and topics,” he said. “Recent experiments with Mythos still boiled down to there being 198 human review findings that sit behind a much larger pool of automated data points.”

In practice, though, this still represents a big challenge for organizations. “Defenders are unfortunately going to get hit by millions of monkeys with typewriters, and some of those monkeys will write very good exploits and some won’t,” he said. “Even so, defenders are going to have to react every time [when bugs are found], whereas attackers will only have to get lucky every few months.”

Avoiding Mutual Assured (Cyber) Destruction

Autonomous offensive systems can now chain exploits, perform reconnaissance, and adapt mid-engagement. “Engineering departments need budget, education, and access to make them AI-native,” Herbert-Voss says. “Figure out what are the things it makes sense for your company/org to build yourselves, and figure out what are the things it makes sense to outsource/buy. However, there is a snake oil problem: Every company claims to be using ‘AI’ in some fashion with catchy buzz words and promises. Security leaders must hold them accountable for their claims.”

In all, there are four key technical advances to lean into as defenders, Herbert-Voss outlines:

- Improved reasoning. This is the most important underlying advance, he says: “So much of security involves deep reasoning. How does this work? How could it break? If I do X and Y happens, what does that imply?”

- Improved tool calling. “You can theorize about ways a system could break all day, but to actually find vulnerabilities, agents need to be able to use tools that let them interact with the real world,” Herbert-Voss says. “Agents are now way better at understanding how and when to use what tools to prove vulnerabilities exist.”

- Quality “harness” engineering. “Agents have a limited context window,” he explains. “They need to be given access to the right context for the right scope with the right tools. Over time we’ve continuously refined this to ensure we’re setting agents up for success and not expecting them to do the impossible.”

- Building the right systems around the harness. “A single agent with a great harness can only do so much,” he explains. “Success in this industry requires multiple agents working together, and you need to build the right systems to enable effective agent-to-agent communication.”

Overall, the pace of vulnerability discovery by good and bad actors is inevitably going to get faster, and the accessibility of so-called “frontier models” is going to continue to increase. Herbert-Voss believes this is actually a positive development.

“There are extreme economic pressures in the AI industry to broaden access to these capabilities, and that is true for both good and bad use cases,” he concluded on Friday. “There’s a lot of concern over how fast things are moving, and it’s something we definitely need to be paying attention to, but I think that there’s also just a lot more opportunity to focus on building multilayer defenses and patching, and using this energy and this momentum to do a lot of the things that we probably should have just been doing in the first place.”