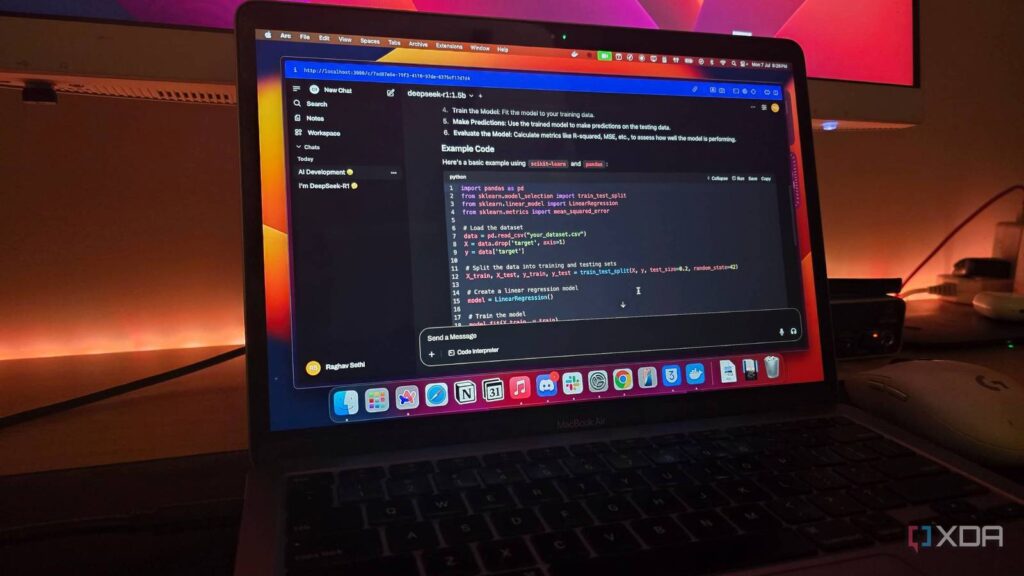

Running a local LLM seems straightforward. You install Ollama, pull a model, and start chatting. But if you’ve spent any time actually using one, you’ve probably noticed that the default experience isn’t always great. Responses can feel repetitive, context seems to get lost, and in extreme cases, models might get caught in an infinite loop. More often than not, the difference between a frustrating local setup and one that actually feels good comes down to settings that most people never bother touching.

I’ve been tweaking these parameters across Ollama, Open WebUI, LM Studio, and raw llama.cpp for a while now, and there are a few that I think everyone running a local model should understand. You don’t need to memorize every sampling algorithm, but knowing what these do and when to change them makes a real difference. Often, model providers will publish the values these parameters should be set to.

Temperature controls how creative or predictable your model is

It’s the first thing most people change, and for good reason

Temperature is probably the most well-known LLM parameter, and it’s the one you’ll adjust most often. What it actually does is scale the logits, which are the raw, unnormalized scores the model assigns to each possible next token, before they’re converted into a probability distribution via the softmax function. Concretely, each logit is divided by the temperature. A lower temperature (like 0.1 or 0.2) sharpens that distribution, making the model heavily favor the most probable token. A higher temperature (0.8 or above) flattens it, giving less likely tokens a better shot at being selected.
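To make the scaling concrete, here is a minimal sketch of a temperature-scaled softmax. The four logits are made-up values for illustration, not output from any real model.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Divide logits by the temperature, then normalize with softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for four candidate tokens.
logits = [4.0, 3.0, 2.0, 1.0]

for t in (0.2, 0.7, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(f"temperature={t}: {[round(p, 3) for p in probs]}")
```

At 0.2 nearly all of the probability lands on the first token, while at 1.5 the tail tokens get a meaningful share.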

For factual questions, code generation, or anything where accuracy matters, keep it low. For creative writing, brainstorming, or conversational use, turn it up. The default in most tools sits around 0.7 or 0.8, which is a reasonable middle ground, but I find myself dropping it to 0.3 or 0.4 for some things. If the output feels robotic, nudge it up. If it starts hallucinating or going off track, pull it back down.

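What’s worth understanding is how temperature interacts with the other sampling parameters, and that depends on where it sits in the sampler chain. Some backends, such as Hugging Face Transformers, apply temperature first, before top-k, top-p, or min-p filtering kicks in. There, a high temperature doesn’t just make the model more random on its own; it also changes which tokens survive the subsequent filtering steps. llama.cpp’s default sampler order does the opposite and applies temperature after the truncation filters, so the filters pick the candidates and temperature only reshapes the odds among the survivors. Either way, a temperature of 1.5 with min-p 0.1 produces very different output than a temperature of 0.5 with the same min-p, so tune the two together rather than in isolation.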

Min-p can be a better alternative to top-p and top-k in some setups

Though not always

Top-k and top-p have been the standard sampling parameters for years. Top-k limits the model to only consider the k most probable next tokens (so top-k of 40 means it picks from the 40 most likely options). Top-p, also called nucleus sampling, takes a different approach, as it considers the smallest set of tokens whose combined probability exceeds your threshold. In other words, top-p of 0.9 means it considers tokens until their probabilities add up to 90%.
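As a rough sketch of those two definitions, the snippet below returns the token indices each filter would keep. The probability lists are invented for illustration, and real samplers work on full vocabularies rather than a handful of entries.

```python
def top_k_filter(probs, k):
    """Keep the indices of the k most probable tokens."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return ranked[:k]


def top_p_filter(probs, p):
    """Keep the smallest set of top tokens whose cumulative probability reaches p."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= p:
            break
    return kept


# Hypothetical distributions: one fairly peaked, one completely flat.
peaked = [0.5, 0.2, 0.15, 0.1, 0.05]
flat = [1 / 128] * 128

print(top_k_filter(peaked, 3))          # [0, 1, 2]
print(top_p_filter(peaked, 0.9))        # [0, 1, 2, 3]
print(len(top_p_filter(flat, 0.9)))     # 116
```

The same top-p value keeps four tokens in one case and 116 in the other, which is the swing in candidate count that the rest of this section is about.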

Both work, but they share the same problem: the cutoff is fixed in advance, regardless of how confident the model is. If the model is 95% sure of the next word, top-k 40 still lets through 39 unlikely candidates. If the model is uncertain and the probability is spread thinly, top-k 40 might cut off perfectly reasonable options, while top-p 0.9 can admit hundreds of tokens, including a long tail of poor ones. You end up constantly trading off between quality in high-confidence situations and diversity in low-confidence ones, and neither feels quite right.

Min-p fixes this by scaling dynamically with the model’s confidence. It sets a minimum probability threshold relative to the top token. If you set min-p to 0.1 and the top token has a 50% probability, only tokens with at least 5% probability (50% x 0.1) make the cut. When the model is confident, the filter is tight. When it’s uncertain, the filter relaxes. In practice, you get noticeably more coherent output, especially at higher temperatures where top-p tends to fall apart.
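The rule is short enough to write out. This is a minimal sketch with invented probabilities; the first list reuses the 50% top token from the example above.

```python
def min_p_filter(probs, min_p):
    """Keep tokens whose probability is at least min_p times the top probability."""
    threshold = min_p * max(probs)
    return [i for i, p in enumerate(probs) if p >= threshold]


# Confident case: top token at 50%, so min_p=0.1 gives a 5% threshold.
confident = [0.50, 0.30, 0.10, 0.06, 0.04]

# Uncertain case: top token at 10%, so the threshold drops to 1%.
uncertain = [0.10] * 4 + [0.02] * 30

print(min_p_filter(confident, 0.1))        # [0, 1, 2, 3]
print(len(min_p_filter(uncertain, 0.1)))   # 34
```

With the same min-p value, the 4% token is dropped in the confident case, while every 2% token survives in the uncertain one.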

With top-p, the number of candidate tokens can swing wildly depending on the probability distribution; sometimes you get five candidates, sometimes you get 500. Min-p keeps the candidate pool proportional to the model’s actual certainty, which means temperature becomes a much more predictable control. You can run higher temperatures without the output going off the rails, because min-p is dynamically tightening the filter as needed.

If your tool supports it (Ollama, llama.cpp, and LM Studio all do), try setting min-p to somewhere between 0.05 and 0.1 and compare it against your usual top-p/top-k settings.
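If you talk to Ollama over its HTTP API, sampling settings go in the request’s options object. The sketch below only builds and prints the JSON body; the model name is a placeholder, the helper function is hypothetical, and it assumes you would POST the result to a local Ollama server’s /api/generate endpoint.

```python
import json


def build_ollama_request(prompt, model, **options):
    """Build a JSON body for Ollama's /api/generate endpoint (hypothetical helper)."""
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,
        "options": options,  # sampling and runtime parameters go here
    }


payload = build_ollama_request(
    "Explain what a KV cache is in two sentences.",
    model="llama3.1:8b",  # placeholder: use whichever model you have pulled
    temperature=0.7,
    min_p=0.05,
)

print(json.dumps(payload, indent=2))
```

Running the same prompt with and without min_p in the options is a quick way to do the comparison suggested above.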

Context length determines how much the model can “see”

And Ollama’s old default was definitely too short

The “num_ctx” parameter controls how many tokens the model holds in its context window. This includes your system prompt, the conversation history, and the current response. Once you exceed it, many local LLM apps and backends start truncating older tokens from the prompt. By default, there’s no warning or error, and the model just quietly loses access to the beginning of your conversation. Some applications, like LM Studio, offer overflow policies such as stopAtLimit, truncateMiddle, and rollingWindow, but not all harnesses do. Context-extension techniques like YaRN (Yet another RoPE extensioN) can also be bolted on to stretch the window, but we’re focusing on the vanilla context window used by most local models here.

Ollama used to default to 2048 tokens, which is surprisingly low. For a quick question-and-answer exchange, that’s fine. If you keep in mind that a token is very roughly three-quarters of an English word, you’ll realize that 2048 tokens isn’t enough to ingest a document, hold a multi-turn conversation, or work through a longer problem. The model will start losing track of what you said earlier, contradicting itself, or ignoring instructions from your system prompt. I’ve seen a lot of people blame model intelligence for these problems, when in actuality, I suspect their context window was the problem.

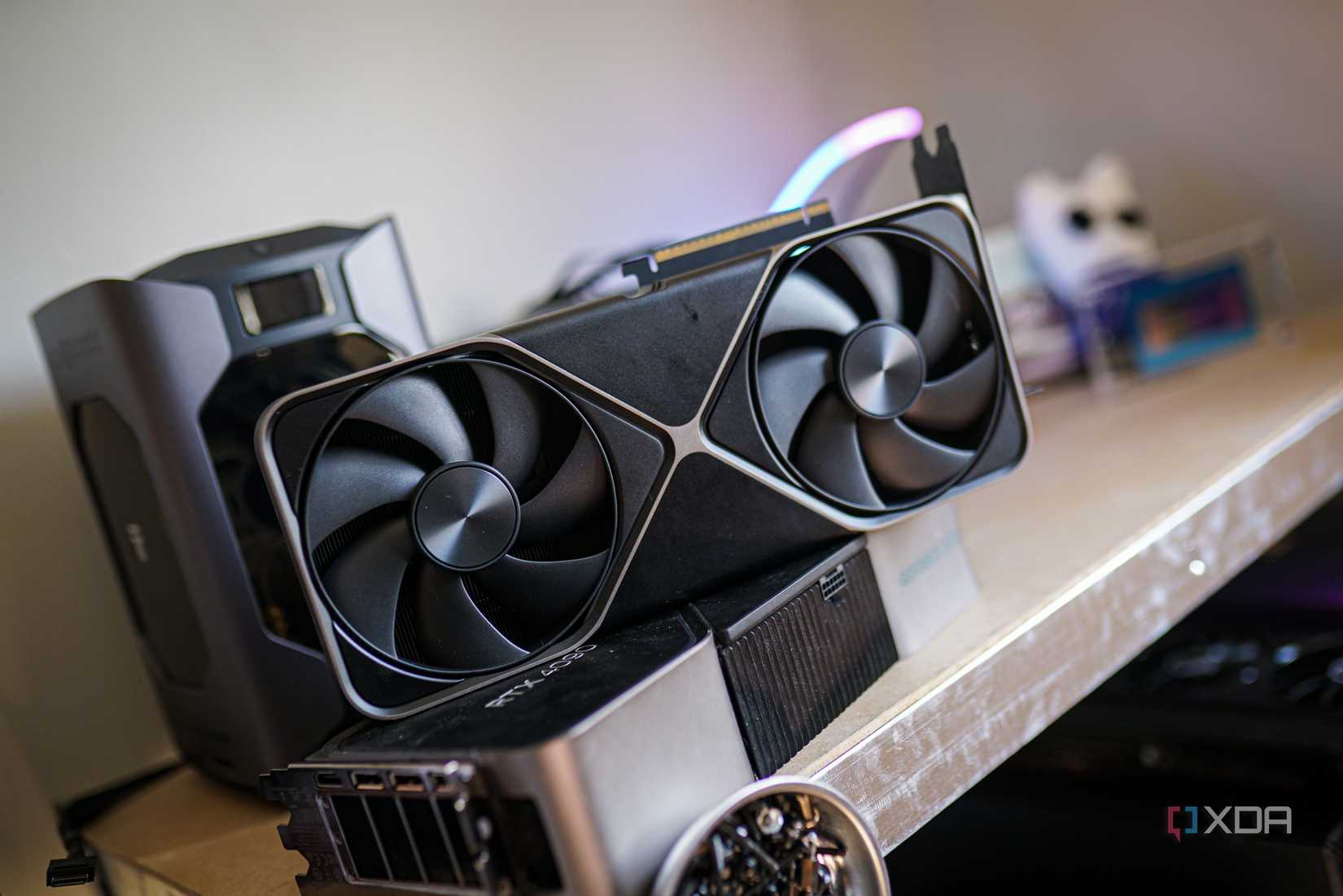

You can increase it by looking for num_ctx or context size in your provider settings, and the method of doing it will differ depending on the provider you’re using. But here’s the catch: context length eats a lot of VRAM. The KV cache (more on that in a bit) scales linearly with context length, and for a typical 8B parameter model at FP16, each additional 4K tokens of context costs anywhere from a few hundred megabytes to 1 GB of VRAM. At 32K context, you might be spending more VRAM on the KV cache than on the model weights themselves.
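The arithmetic behind that estimate is simple enough to check yourself. The sketch below assumes an 8B-class model with 32 layers, 8 key-value heads (grouped-query attention), and a head dimension of 128; those shape numbers are assumptions for illustration, so substitute the values from your own model’s metadata.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, n_ctx, bytes_per_element=2):
    """Estimate KV cache size: keys and values for every layer and every token."""
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_element


# Assumed shape for an 8B-class model with grouped-query attention, FP16 cache.
per_4k = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, n_ctx=4096)
at_32k = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, n_ctx=32768)

print(per_4k / 2**20, "MiB per 4K tokens")   # 512.0
print(at_32k / 2**30, "GiB at 32K context")  # 4.0
```

Models without grouped-query attention have more key-value heads, so the same context costs proportionally more.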

There’s also a performance dimension. Attention computation scales quadratically with sequence length in standard transformer architectures (though flash attention helps, which we’ll get to as well). So doubling your context from 8K to 16K doesn’t just double the KV cache’s VRAM usage, it also increases the time per token for processing the full context. If you’re running on a consumer GPU with 8 or 12 GB of VRAM, you’ll need to find a balance between context size and model size, and KV cache quantization can help stretch that further.

KV cache quantization saves VRAM without killing quality

This is probably the most underrated setting for consumer GPUs

Every token in your context window gets stored in what’s called the KV (key-value) cache. This is where the model stores its computed attention keys and values for each token so it doesn’t have to recompute them on every generation step. By default, this cache uses FP16 precision, and it grows linearly with your context length. Each layer of the model maintains its own KV cache, so for a 32-layer model with 32K context, you’re looking at a lot of stored data. On long conversations, the KV cache alone can eat several gigabytes of VRAM.

KV cache quantization compresses this cache to a lower precision. At Q8_0 (8-bit), you roughly halve the VRAM required for context with almost no perceptible quality loss in most models. One benchmark showed that it added 0.002 to 0.05 perplexity to Qwen2.5-Coder, which is basically nothing. The reason the quality hit is so small is that the KV cache stores intermediate attention representations, not model weights, and these representations are more tolerant of reduced precision than the weights themselves.

If you’re more VRAM-constrained, Q4_0 (4-bit) cuts the cache to about a quarter of the original size, but there’s a real quality tradeoff. It adds around 0.2 to 0.25 perplexity, which you will notice on tasks that require precision, like coding or factual queries. The degradation tends to show up as subtle context-tracking errors, and the model might lose the thread of a specific instruction or confuse details from earlier in the conversation. For creative writing or casual chat, though, it’s still perfectly usable.
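To put rough numbers on those savings, the sketch below compares cache sizes at 32K context. It assumes the same hypothetical 8B-class shape (32 layers, 8 key-value heads, head dimension 128) and uses the effective bits per element of the GGML formats, where Q8_0 and Q4_0 each store one 16-bit scale per block of 32 values.

```python
# Effective bits per stored element, including the per-block scale.
BITS_PER_ELEMENT = {"f16": 16.0, "q8_0": 8.5, "q4_0": 4.5}


def kv_cache_mib(cache_type, n_ctx, n_layers=32, n_kv_heads=8, head_dim=128):
    """Estimate KV cache size in MiB for an assumed model shape."""
    elements = 2 * n_layers * n_kv_heads * head_dim * n_ctx  # keys + values
    return elements * BITS_PER_ELEMENT[cache_type] / 8 / 2**20


for cache_type in ("f16", "q8_0", "q4_0"):
    print(cache_type, kv_cache_mib(cache_type, n_ctx=32768), "MiB")
# f16 4096.0 MiB
# q8_0 2176.0 MiB
# q4_0 1152.0 MiB
```

The per-block scales are why the savings come out slightly under an exact half and an exact quarter.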

In Ollama, you can enable this with the OLLAMA_KV_CACHE_TYPE environment variable (for example, q8_0 or q4_0); it only takes effect when flash attention is enabled as well. In llama.cpp, use the --cache-type-k and --cache-type-v flags (and yes, you can quantize keys and values separately, and some people run Q8 keys with Q4 values as a compromise). If you’re running 16K or 32K context on a 12 GB GPU, this is often the difference between being able to fit the model entirely in VRAM or not.

Repetition and presence penalties stop the model from looping

They sound similar, but they work differently

These two parameters both fight repetition, but they do it in distinct ways, and understanding the difference matters if you want to tune them properly.

Repetition penalty is the older and more widely supported of the two. It applies a multiplicative penalty to the logits of any token that has appeared within the last n tokens (controlled by repeat_last_n). A value of 1.0 means no penalty, and values above 1.0 reduce the probability of recently seen tokens. Most people find a sweet spot between 1.05 and 1.15. Go too high and the model starts awkwardly avoiding common words like “the” and “is,” producing text that reads like it’s trying too hard to use a thesaurus. Go too low and you’ll see the same phrases cycling back in.

Presence penalty works differently. Instead of scaling a token’s logit multiplicatively, it subtracts a flat amount from the logit of any token that has already appeared in the preceding text (in llama.cpp, within the same repeat_last_n window), regardless of how many times. A token that appeared once gets the same penalty as one that appeared fifty times. This makes it more about topic diversity than repetition avoidance. It nudges the model to introduce new vocabulary and ideas rather than recycling what it’s already said. Typical values range from 0.0 to 0.5, though some model providers recommend higher.

There’s also frequency penalty, which is the third member of this family in llama.cpp. It scales the penalty proportionally to how many times a token has appeared; a word used ten times gets penalized more than one used twice. This is useful for long-form generation where certain words naturally recur but you still want the model to gradually shift its vocabulary.

All three are applied before sampling truncation (top-k, top-p, min-p), which means penalized tokens get deprioritized before the probability redistribution happens. You can combine them, but start with one at a time. For example, Qwen3.5-35B-A3B’s official guidance when run in thinking mode for general tasks is to set a presence_penalty of 1.5 and a repetition_penalty of 1.0.
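The difference between the three is easier to see side by side. The sketch below is a simplified take on how they adjust logits, loosely following llama.cpp’s approach (positive logits are divided by the repetition penalty, negative ones multiplied); the token names, logits, and history are invented for illustration.

```python
from collections import Counter


def apply_penalties(logits, recent_tokens, repeat_penalty=1.0,
                    presence_penalty=0.0, frequency_penalty=0.0):
    """Return a copy of logits with penalties applied to recently seen tokens."""
    counts = Counter(recent_tokens)
    adjusted = dict(logits)
    for token, count in counts.items():
        if token not in adjusted:
            continue
        value = adjusted[token]
        # Repetition penalty: multiplicative, always pushes the logit down.
        value = value / repeat_penalty if value > 0 else value * repeat_penalty
        # Presence penalty: flat subtraction, independent of the count.
        value -= presence_penalty
        # Frequency penalty: subtraction that grows with the count.
        value -= frequency_penalty * count
        adjusted[token] = value
    return adjusted


# Hypothetical logits and recent history ("the" seen twice, "cat" once).
logits = {"the": 2.0, "cat": 1.0, "dog": 1.0}
history = ["the", "the", "cat"]

print(apply_penalties(logits, history, repeat_penalty=1.1))
print(apply_penalties(logits, history, presence_penalty=0.5))
print(apply_penalties(logits, history, frequency_penalty=0.1))
```

In each case "dog" is untouched because it has not appeared yet, and only the frequency penalty treats "the" more harshly than "cat".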

Chat templates control how your model interprets messages

Get this wrong and the model won’t follow instructions at all

This is one of those settings that most people never think about, but it can completely change the quality of your model’s output. A chat template defines how the raw conversation, like your system prompt, user messages, and assistant responses, gets formatted into the actual token sequence the model sees. Different models were trained with different template formats, and using the wrong one is like speaking to someone in a language they only half understand.

Most models you’ll pull from Ollama have their chat template baked into the model metadata, so it’s handled automatically. But if you’re loading a model manually in llama.cpp or LM Studio, or using a GGUF you downloaded from Hugging Face, you might need to set it or modify it yourself. The most common formats are ChatML (used by the likes of Qwen, Yi, and Mistral variants), Llama 3’s format (which uses markers such as <|start_header_id|> and <|eot_id|>), and Alpaca-style instruction formatting.
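To show what a template actually produces, here is a minimal sketch that renders a conversation into ChatML by hand. The function name and messages are made up for illustration; in practice the runtime does this for you from the template stored with the model.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Format a list of {'role', 'content'} messages as a ChatML prompt string."""
    parts = [
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n"
        for m in messages
    ]
    if add_generation_prompt:
        # Open an assistant turn so the model knows it should answer next.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "You are a concise assistant."},
    {"role": "user", "content": "What does num_ctx control?"},
]

print(render_chatml(messages))
```

Feed the same text to a model trained on a different format and those markers are just odd-looking text to it, which is why a mismatched template hurts instruction following.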

In llama.cpp, you can specify the template with the --chat-template flag. In Ollama, the TEMPLATE instruction in your Modelfile uses Go template syntax to define how messages are structured. If you’re building a custom Modelfile, getting this right is important, as a malformed template can cause the model to ignore your system prompt entirely, or worse, treat your user messages as part of the assistant’s response.

On top of that, newer reasoning models like Qwen3 and GLM-4 support template kwargs that control model behavior at the template level. The most common one is “enable_thinking”, which toggles whether the model produces chain-of-thought reasoning before its answer. In llama.cpp, you pass this with --chat-template-kwargs '{"enable_thinking": false}', though this differs by model. This is worth knowing because thinking mode can seriously slow down inference. Often, the model generates hundreds of reasoning tokens before the actual response, and for straightforward tasks, you simply don’t need it. Disabling it can cut the wait before the actual answer starts by a lot without hurting output quality on simpler prompts.

Flash attention is free performance you should turn on

If your setup supports it, there’s no reason not to

Flash attention is an optimization for how the model computes attention over the context window. Standard attention computes a full N x N attention matrix, which is both memory-intensive and slow for long sequences. Flash attention restructures this computation to work in tiles, avoiding the need to materialize the full matrix in memory. That means lower VRAM usage and faster computation, especially at longer context lengths.
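The core trick is an "online" softmax that processes keys and values one tile at a time while keeping a running maximum and running sum. The sketch below shows that idea for a single query vector in plain Python. It is a toy illustration with invented data, not how the real GPU kernels are written; they also tile over queries and keep the working set in fast on-chip memory.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def standard_attention(q, keys, values):
    """Reference version: score every key at once, then softmax and mix values."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * scale for k in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]


def tiled_attention(q, keys, values, block_size=2):
    """Same result, but only one block of scores exists at any time."""
    scale = 1.0 / math.sqrt(len(q))
    dim = len(values[0])
    running_max, running_sum = -math.inf, 0.0
    acc = [0.0] * dim
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [dot(q, k) * scale for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale what we accumulated so far to the new maximum.
        correction = math.exp(running_max - new_max)
        weights = [math.exp(s - new_max) for s in scores]
        running_sum = running_sum * correction + sum(weights)
        acc = [a * correction + sum(w * v[d] for w, v in zip(weights, v_blk))
               for d, a in enumerate(acc)]
        running_max = new_max
    return [a / running_sum for a in acc]


# Invented query, keys, and values for illustration.
q = [1.0, 0.5]
keys = [[0.1, 0.2], [0.9, -0.3], [0.4, 0.4], [-0.5, 1.0], [2.0, 0.1]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [2.0, -1.0], [-1.0, 3.0]]

print(standard_attention(q, keys, values))
print(tiled_attention(q, keys, values))
```

Both functions print the same vector up to floating-point rounding, which is the sense in which the tiled computation is equivalent to standard attention.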

It’s supported by most modern models in llama.cpp-based tools. In Ollama, you can enable it with the OLLAMA_FLASH_ATTENTION environment variable. In llama.cpp directly, use the --flash-attn flag. On Nvidia GPUs, llama.cpp runs its own CUDA flash attention kernels, and AMD GPUs are covered through the ROCm (HIP) build.

The gains are most noticeable with longer context windows, where the quadratic scaling of standard attention really starts to pose a problem. At 4K context, the difference is modest. At 16K or 32K, it can mean the difference between normal interactive speeds and waiting several seconds per token. It won’t change your output quality, as it’s mathematically equivalent to standard attention (apart from tiny floating-point rounding differences), just computed more efficiently. If you’re running any model with a context length above 4K, just turn it on: in most setups, you’ll likely see faster token generation and lower VRAM usage.

Model quantization determines the baseline

Not all Q4s are created equal

This one isn’t a setting as such, but before you even get to sampling parameters, the quantization level of the model itself sets the ceiling for output quality. Most local LLM users are running quantized models (Q4_K_M, Q5_K_M, Q6_K, Q8_0, and so on), and the naming convention can be confusing.

The primary number that you see simply refers to the nominal bit-width. Q4 means roughly 4 bits per weight, Q8 means 8. The rest of the name tells you about the quantization method. “K” means k-quants, which use a more sophisticated block-based approach that preserves quality better than the older legacy formats such as Q4_0 and Q4_1. The final letter (S, M, L) indicates the size variant (small, medium, or large), which determines how many layers get a slightly higher precision treatment. Q4_K_M, for example, quantizes most layers to 4-bit but keeps some attention and output layers at a higher precision, while Q4_K_S applies 4-bit more aggressively across the board.
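For a feel of what bit-width costs in precision, here is a deliberately simplified sketch of block quantization: one scale per block of 32 values, with each value rounded to a small signed integer. It is closer in spirit to the legacy formats than to k-quants, whose actual layouts are more involved, and the weights are synthetic.

```python
import math


def quantize_block(values, bits):
    """Quantize a block to signed integers that share a single scale."""
    qmax = 2 ** (bits - 1) - 1          # 127 for 8-bit, 7 for 4-bit
    largest = max(abs(v) for v in values)
    scale = largest / qmax if largest > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale, quantized):
    """Recover approximate values from the integers and the shared scale."""
    return [scale * q for q in quantized]


def mean_abs_error(values, bits):
    scale, quantized = quantize_block(values, bits)
    restored = dequantize_block(scale, quantized)
    return sum(abs(a - b) for a, b in zip(values, restored)) / len(values)


# Synthetic "weights" for one block of 32 values.
weights = [math.sin(i) for i in range(32)]

for bits in (8, 4):
    print(f"{bits}-bit mean absolute error: {mean_abs_error(weights, bits):.5f}")
```

The 4-bit error comes out far larger than the 8-bit one, which is the gap that mixed-precision variants like Q4_K_M try to narrow by spending extra bits where they matter most.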

It’s also worth keeping an eye on NVFP4 and MXFP4, which are hardware-accelerated 4-bit formats supported natively on newer Nvidia GPUs (Blackwell and later). Unlike software-based k-quants, these run the 4-bit math directly on the tensor cores, so you get both the VRAM savings of Q4 and faster inference. Support in llama.cpp is still maturing, but if you’re on an RTX 50-series card, it’s worth experimenting with in the tools that can use it.

As a general rule, Q4_K_M is the sweet spot for most consumer setups, as it’s small enough to fit comfortably in VRAM while retaining most of the model’s capability. Q5_K_M and Q6_K offer incremental quality improvements if you have the headroom. Q8_0 is usually very close to full precision in practice but requires significantly more VRAM, and at that point you might be better off running a larger model at a lower-bit quantization. A Q8 quantized 8B model will typically lose to a Q4_K_M quantized 14B model on most benchmarks, even though they use similar amounts of VRAM. At that stage, you’re trading the raw knowledge of a larger model for the greater precision of the less knowledgeable one, and it’s often better to just go for the larger model instead.

The key thing to understand is that quantization and sampling parameters interact. A heavily quantized model (Q3 or Q2) will benefit less from careful sampling tuning because the quality ceiling is already constrained by the quantization itself. The probability distributions from a Q2 model are noisier and less well-calibrated than from a Q6, so your min-p filter has worse data to work with. If you find yourself endlessly tweaking temperature and min-p without getting good results, the quantization level of your model might be the actual bottleneck.

It’s hard to get started

None of these settings are hidden, but they’re not obvious either if you’re just getting started. The defaults in most local LLM tools are conservative, being designed for broad compatibility rather than good output. Once you understand what each parameter does and how they interact with each other, you can tune the experience to your hardware and your use case.

The gap between default settings and a properly configured setup is bigger than most people expect. That’s what took my local setup from something I tolerated to something I actually prefer for a lot of my day-to-day work.